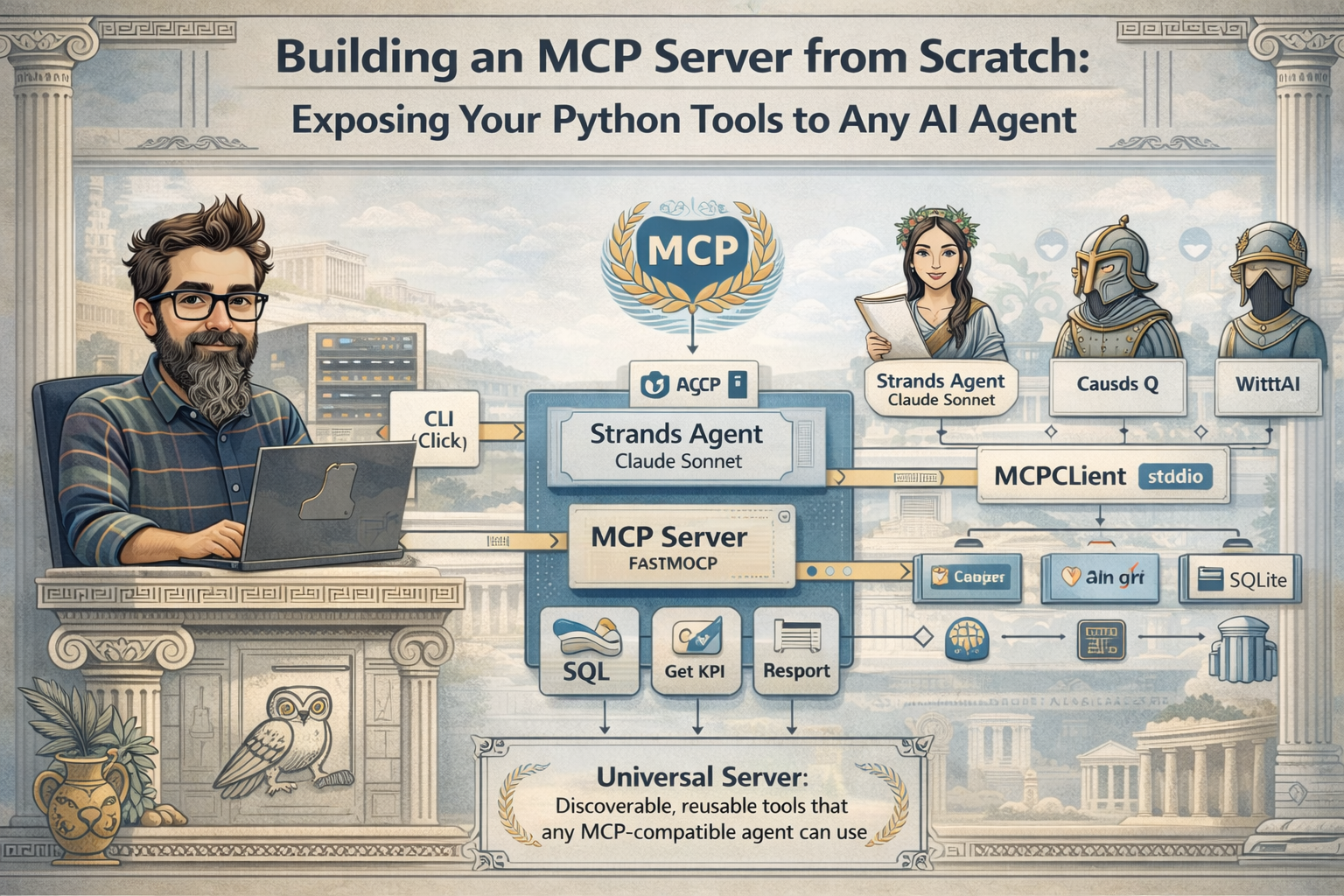

Building an MCP Server from Scratch: Exposing Your Python Tools to Any AI Agent

Every AI agent framework has its own way of defining tools. You write a function, decorate it, and your agent can call it. But what happens when you want a different agent, running in a different framework, to use the same tool? You rewrite it. The Model Context Protocol (MCP) solves this by defining a universal standard: build your tools once as an MCP server, and any MCP-compatible client can discover and use them automatically.

In this project we build a custom MCP server that exposes business tools (SQL queries, KPI calculations, report generation) over a sales database. Then we consume it from a Strands Agent using the native MCPClient integration. The same server could be used from Claude Code, Cursor, Amazon Q, or any other MCP-compatible client without changing a single line.

graph LR
    CLI["CLI (Click)"] --> Agent["Strands Agent (Claude Sonnet)"]
    Agent --> MCPClient["MCPClient (stdio)"]
    MCPClient --> Server["MCP Server (FastMCP)"]
    Server --> SQL["sql_query"]
    Server --> KPI["get_kpi"]
    Server --> Report["sales_report"]
    SQL --> DB["SQLite"]
    KPI --> DB
    Report --> DB

What is MCP?

The Model Context Protocol is an open standard that defines how AI agents communicate with external tools. Instead of each framework inventing its own tool interface, MCP provides a shared protocol: the server exposes tools with JSON schemas describing their parameters, and the client discovers and invokes them over a transport layer (stdio for local processes, or Streamable HTTP, which replaced the older HTTP+SSE transport).

The key insight is separation of concerns. The MCP server knows nothing about the AI model consuming it. The client knows nothing about the tool implementation. They communicate through a well-defined contract, just like a REST API, but designed for agent-tool interaction.
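
To make that contract a bit more concrete, here is a rough sketch of the JSON-RPC 2.0 messages behind a tool call. The `tools/call` method, the `inputSchema` field and the `content` list in the result follow the MCP specification; the ids, the description text, the argument values and the returned payload are purely illustrative.

```python
import json

# One entry of the list a server returns for "tools/list": a name, a
# human-readable description and a JSON Schema for the arguments.
tool_definition = {
    "name": "get_kpi",
    "description": "Calculate a business KPI from the sales database.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "metric": {"type": "string"},
            "period": {"type": "string", "default": "all"},
        },
        "required": ["metric"],
    },
}

# The request a client sends to invoke that tool.
call_request = {
    "jsonrpc": "2.0",
    "id": 2,
    "method": "tools/call",
    "params": {
        "name": "get_kpi",
        "arguments": {"metric": "revenue", "period": "2025"},
    },
}

# The shape of a successful response: the tool output travels as content items.
# The text payload here is made up for the example.
call_result = {
    "jsonrpc": "2.0",
    "id": 2,
    "result": {
        "content": [{"type": "text", "text": json.dumps({"revenue": 1234.5})}],
        "isError": False,
    },
}

print(json.dumps(call_request, indent=2))
```

Neither side needs to know anything beyond these messages, which is what keeps the server and the client independent of each other.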

Building the MCP server

The server uses FastMCP, the high-level Python API included in the official MCP SDK. Each tool is a decorated function: the type hints generate the JSON schema that clients use to understand the parameters, and the docstring becomes the tool description:

from mcp.server.fastmcp import FastMCP

# query_sales, calculate_kpi and generate_report are the plain Python functions
# described in the next section; import them from wherever they live in your project.

mcp = FastMCP(name="sales-tools")


@mcp.tool()
def sql_query(query: str) -> str:
    """Execute a SQL query against the sales database.

    The database contains three tables:
    - customers: id, name, region, created_at
    - products: id, name, category, unit_price
    - orders: id, customer_id, product_id, quantity, total_amount, order_date

    Only SELECT queries are allowed.
    """
    return query_sales(query)


@mcp.tool()
def get_kpi(metric: str, period: str = "all") -> str:
    """Calculate a business KPI from the sales database.

    Available metrics: revenue, order_count, avg_order_value, top_products,
    top_customers, revenue_by_region, revenue_by_category.

    Available periods: all, last_month, last_quarter, last_year, 2024, 2025, 2026.
    """
    return calculate_kpi(metric, period)


@mcp.tool()
def sales_report() -> str:
    """Generate a comprehensive markdown sales report with key metrics,
    top products, regional breakdown, and category analysis."""
    return generate_report()


if __name__ == "__main__":
    # With no arguments, run() serves over the stdio transport.
    mcp.run()

That's the entire server. The @mcp.tool() decorator does three things: registers the function as an MCP tool, extracts parameter types from the signature to build the JSON schema, and uses the docstring as the tool description that agents read to decide when and how to call it. When you run python server/main.py, it starts listening on stdio for MCP requests.
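
To get a feel for what "extracting the schema from the signature" means, here is a deliberately simplified, standard-library-only sketch. It is not FastMCP's code (the real SDK handles far more types and validation); `build_input_schema`, `describe_tool` and the type mapping are hypothetical names invented for this illustration.

```python
import inspect
from typing import get_type_hints

# Simplified mapping from Python annotations to JSON Schema type names.
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def build_input_schema(func) -> dict:
    """Derive a minimal JSON Schema for the parameters of func."""
    hints = get_type_hints(func)
    properties, required = {}, []
    for name, param in inspect.signature(func).parameters.items():
        # Unknown or missing annotations fall back to "string" in this sketch.
        prop = {"type": _JSON_TYPES.get(hints.get(name), "string")}
        if param.default is inspect.Parameter.empty:
            required.append(name)  # no default value means the argument is mandatory
        else:
            prop["default"] = param.default
        properties[name] = prop
    return {"type": "object", "properties": properties, "required": required}


def describe_tool(func) -> dict:
    """Bundle name, docstring and schema the way a tool listing would."""
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "inputSchema": build_input_schema(func),
    }


def get_kpi(metric: str, period: str = "all") -> str:
    """Calculate a business KPI from the sales database."""
    return ""


tool = describe_tool(get_kpi)
print(tool["inputSchema"])
```

The practical takeaway is that precise type hints and a clear docstring are not cosmetic: they are the only information the agent has when deciding how to call the tool.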

The business tools

Behind the MCP layer, each tool is a regular Python function operating on a SQLite database.

The SQL query tool accepts only statements that start with SELECT and returns the results as JSON. This prefix check is a guard against accidental data mutations rather than a full security boundary. If the query fails, the tool returns the error along with the schema description so the agent can self-correct:

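import json
import sqlite3

# DB_PATH and SCHEMA_DESCRIPTION are module-level constants defined elsewhere in the project.


def query_sales(sql: str) -> str:
    # Guard against accidental writes: accept only statements that start with SELECT.
    normalized = sql.strip().upper()
    if not normalized.startswith("SELECT"):
        return json.dumps({"error": "Only SELECT queries are allowed"})

    conn = sqlite3.connect(DB_PATH)
    conn.row_factory = sqlite3.Row
    try:
        cursor = conn.execute(sql)
        columns = [desc[0] for desc in cursor.description] if cursor.description else []
        rows = [dict(zip(columns, row)) for row in cursor.fetchall()]
        # default=str serializes values that json cannot handle natively.
        return json.dumps(rows, default=str)
    except sqlite3.Error as e:
        # Return the schema with the error so the agent can fix its query and retry.
        return json.dumps({"error": str(e), "schema": SCHEMA_DESCRIPTION})
    finally:
        conn.close()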

The KPI tool uses a Strategy pattern to route metric names to specific calculators. Each calculator runs its own SQL query and returns structured results:

def _calculate(conn: sqlite3.Connection, metric: str, date_filter: str) -> dict:
    calculators = {
        "revenue": _revenue,
        "order_count": _order_count,
        "avg_order_value": _avg_order_value,
        "top_products": _top_products,
        "top_customers": _top_customers,
        "revenue_by_region": _revenue_by_region,
        "revenue_by_category": _revenue_by_category,
    }
    calculator = calculators.get(metric)
    if not calculator:
        return {"error": f"Unknown metric: {metric}", "available": list(calculators.keys())}
    return calculator(conn, date_filter)

This design makes adding new KPIs trivial: write a function and add it to the dictionary.
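
As an illustration, here is what adding a metric could look like. Everything specific in this sketch is an assumption: `_units_sold` is a hypothetical calculator, the result shape is invented, and `date_filter` is assumed to be either an empty string or a trusted, internally generated WHERE fragment (never raw user input). The dispatch function mirrors the one above, reduced to a single entry and run against an in-memory table.

```python
import sqlite3


def _units_sold(conn: sqlite3.Connection, date_filter: str) -> dict:
    """Hypothetical calculator: total quantity across the matching orders."""
    # date_filter is assumed to be trusted SQL produced by our own code.
    row = conn.execute(
        f"SELECT COALESCE(SUM(quantity), 0) FROM orders {date_filter}"
    ).fetchone()
    return {"metric": "units_sold", "value": row[0]}


# In the real module this would be one more entry in the existing dictionary.
calculators = {"units_sold": _units_sold}


def calculate(conn: sqlite3.Connection, metric: str, date_filter: str = "") -> dict:
    calculator = calculators.get(metric)
    if not calculator:
        return {"error": f"Unknown metric: {metric}", "available": list(calculators)}
    return calculator(conn, date_filter)


# Tiny in-memory dataset to exercise the new metric.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, quantity INTEGER, order_date TEXT)"
)
conn.executemany(
    "INSERT INTO orders (quantity, order_date) VALUES (?, ?)",
    [(2, "2024-12-30"), (3, "2025-01-15"), (5, "2025-02-01")],
)

all_time = calculate(conn, "units_sold")
in_2025 = calculate(conn, "units_sold", "WHERE order_date >= '2025-01-01'")
unknown = calculate(conn, "margin")
conn.close()

print(all_time, in_2025, unknown)
```

Remember to list the new metric in the get_kpi docstring as well, since that text is what the agent reads to learn which metrics exist.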

The report tool generates a comprehensive markdown report with overview metrics, top products, regional breakdown, and category analysis. The agent can use this when the user wants a broad summary instead of specific data points.
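
The report code isn't shown in this article, so the following is only a sketch of how such a report could be assembled as a markdown string. The helper names (`markdown_table`, `render_report`), the section titles and the sample figures are all made up for the example; in the real tool the numbers would come from SQL queries.

```python
def markdown_table(headers: list[str], rows: list[tuple]) -> str:
    """Render a header row, a separator row and one line per data row."""
    lines = [
        "| " + " | ".join(headers) + " |",
        "| " + " | ".join("---" for _ in headers) + " |",
    ]
    for row in rows:
        lines.append("| " + " | ".join(str(cell) for cell in row) + " |")
    return "\n".join(lines)


def render_report(overview: dict, top_products: list[tuple]) -> str:
    """Combine overview bullets and a product table into one markdown document."""
    parts = ["# Sales Report", "", "## Overview", ""]
    parts += [f"- **{label}**: {value}" for label, value in overview.items()]
    parts += ["", "## Top products", ""]
    parts.append(markdown_table(["Product", "Revenue"], top_products))
    return "\n".join(parts)


# Illustrative numbers only.
report = render_report(
    {"Revenue": "1,250.00", "Orders": 3},
    [("Widget", "750.00"), ("Gadget", "500.00")],
)
print(report)
```

Returning markdown works well for this kind of tool because the agent can relay it to the user almost verbatim or summarize it further.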

Consuming the server with Strands Agents

On the client side, we use MCPClient from the Strands SDK to connect to our MCP server. The client starts the server as a subprocess and communicates through stdio:

import sys
from pathlib import Path

from mcp import stdio_client, StdioServerParameters
from strands import Agent
from strands.tools.mcp import MCPClient

# create_bedrock_model, Models and SYSTEM_PROMPT are project-level helpers and constants.

SERVER_SCRIPT = str(Path(__file__).resolve().parent.parent / "server" / "main.py")


def create_mcp_client() -> MCPClient:
    # Launch the server with the same interpreter that runs the client.
    return MCPClient(
        lambda: stdio_client(
            StdioServerParameters(
                command=sys.executable,
                args=[SERVER_SCRIPT],
            )
        )
    )


def create_analyst_agent(mcp_client: MCPClient) -> Agent:
    bedrock_model = create_bedrock_model(model=Models.CLAUDE_45)
    return Agent(
        model=bedrock_model,
        system_prompt=SYSTEM_PROMPT,
        tools=[mcp_client],
    )

The MCPClient handles the full lifecycle: starting the server subprocess, performing the MCP handshake, discovering available tools, and converting them into Strands-compatible tool definitions. When passed as a tool to the Agent, all three MCP tools (sql_query, get_kpi, sales_report) become available automatically. The agent doesn't know or care that the tools come from an MCP server rather than local Python functions.
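
Under the hood, the handshake and discovery steps are a short exchange of JSON-RPC messages. The sketch below only builds the client's side of that exchange and frames it the way the stdio transport expects (one JSON message per line); it doesn't start a subprocess or talk to a real server. The method names follow the MCP specification, while the protocol version string, the client name and the `frame` helper are illustrative assumptions.

```python
import json


def frame(message: dict) -> bytes:
    """Encode one JSON-RPC message as a single newline-terminated line."""
    return (json.dumps(message, separators=(",", ":")) + "\n").encode("utf-8")


handshake = [
    # 1. The client introduces itself and proposes a protocol version.
    {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "initialize",
        "params": {
            "protocolVersion": "2025-06-18",
            "capabilities": {},
            "clientInfo": {"name": "example-client", "version": "0.1.0"},
        },
    },
    # 2. After the server has answered, the client confirms with a notification
    #    (notifications carry no "id" because no response is expected).
    {"jsonrpc": "2.0", "method": "notifications/initialized"},
    # 3. The client asks which tools are available.
    {"jsonrpc": "2.0", "id": 2, "method": "tools/list"},
]

wire_bytes = b"".join(frame(message) for message in handshake)
print(wire_bytes.decode("utf-8"))
```

MCPClient takes care of all of this, including waiting for each response, so application code never has to build these messages by hand.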

The system prompt positions the agent as a business analyst:

SYSTEM_PROMPT = """You are a business analyst assistant with access to a sales database through MCP tools.

You help users understand their sales data by querying the database, calculating KPIs,
and generating reports. When answering questions:

- Use the sql_query tool for custom queries. The database has tables: customers, products, orders.
- Use the get_kpi tool for standard metrics like revenue, order_count, top_products, etc.
- Use the sales_report tool for a comprehensive overview.
- Present numbers clearly with currency formatting when appropriate.
- Explain trends and insights, not just raw data.
"""

The CLI

The entry point uses Click with two commands: seed, which populates the database with sample data, and ask, which sends a question to the agent. The handler behind ask looks like this:

import click

# create_mcp_client and create_analyst_agent are the factory functions shown above.


@click.command()
@click.argument("question", type=str)
def run(question: str):
    """Ask a question about the sales data using the MCP-powered agent."""
    mcp_client = create_mcp_client()
    agent = create_analyst_agent(mcp_client)
    response = agent(question)
    click.echo(f"\n{response}")

The MCPClient is passed directly to the Agent, which manages its lifecycle automatically: it starts the server subprocess, discovers the tools, uses them, and cleans up when done. No manual context manager needed.

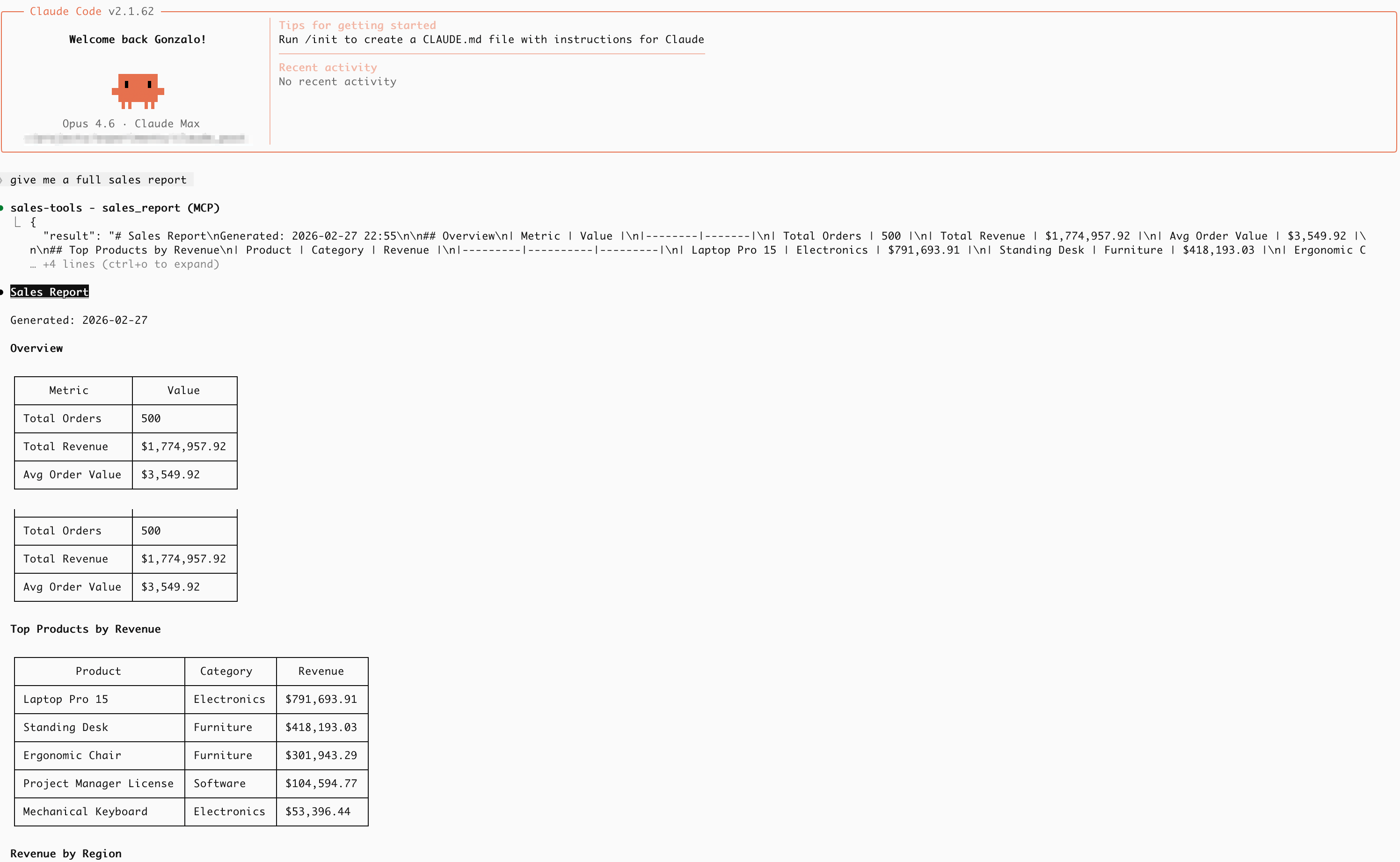

Running it

First, seed the database:

python src/cli.py seed
# Database seeded at /path/to/src/db/sales.db
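
The seed command itself isn't shown in this article. As a rough idea of what it has to do, here is a minimal sketch that creates the three tables and inserts a few rows. The table and column names come from the sql_query docstring; the column types, the constraints and every sample row are assumptions made for the example.

```python
import sqlite3

SCHEMA = """
CREATE TABLE IF NOT EXISTS customers (
    id INTEGER PRIMARY KEY,
    name TEXT NOT NULL,
    region TEXT NOT NULL,
    created_at TEXT NOT NULL
);
CREATE TABLE IF NOT EXISTS products (
    id INTEGER PRIMARY KEY,
    name TEXT NOT NULL,
    category TEXT NOT NULL,
    unit_price REAL NOT NULL
);
CREATE TABLE IF NOT EXISTS orders (
    id INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customers(id),
    product_id INTEGER NOT NULL REFERENCES products(id),
    quantity INTEGER NOT NULL,
    total_amount REAL NOT NULL,
    order_date TEXT NOT NULL
);
"""

# Made-up sample data; total_amount is quantity * unit_price.
CUSTOMERS = [(1, "Acme Corp", "EMEA", "2024-03-01"), (2, "Globex", "AMER", "2024-06-15")]
PRODUCTS = [(1, "Widget", "Hardware", 25.0), (2, "Support Plan", "Services", 100.0)]
ORDERS = [
    (1, 1, 1, 4, 100.0, "2025-01-10"),
    (2, 2, 2, 1, 100.0, "2025-02-05"),
    (3, 1, 2, 2, 200.0, "2025-03-20"),
]


def seed(conn: sqlite3.Connection) -> None:
    """Create the schema and insert sample rows; safe to run more than once."""
    conn.executescript(SCHEMA)
    # INSERT OR IGNORE skips rows whose primary key already exists.
    conn.executemany("INSERT OR IGNORE INTO customers VALUES (?, ?, ?, ?)", CUSTOMERS)
    conn.executemany("INSERT OR IGNORE INTO products VALUES (?, ?, ?, ?)", PRODUCTS)
    conn.executemany("INSERT OR IGNORE INTO orders VALUES (?, ?, ?, ?, ?, ?)", ORDERS)
    conn.commit()


conn = sqlite3.connect(":memory:")  # the real command would use the database file
seed(conn)
seed(conn)  # a second run leaves the data unchanged
order_count = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
revenue = conn.execute("SELECT SUM(total_amount) FROM orders").fetchone()[0]
conn.close()
print(order_count, revenue)
```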

Then ask questions:

python src/cli.py ask "What are the top 3 products by revenue?"
python src/cli.py ask "Compare sales performance across regions for 2025"
python src/cli.py ask "Generate a full sales report"

The agent reads the question, decides which MCP tool to call (or combines multiple calls), processes the results, and responds in natural language with actual data from the database.

Using it from any MCP client

Here's where MCP shines. The same server we built for the Strands Agent works with any MCP-compatible client, no code changes needed. Every client that supports MCP (Claude Code, Cursor, Windsurf, Amazon Q Developer, VS Code with Copilot, and many others) uses the same configuration pattern: point it to the server command and it handles the rest.

For example, in Claude Code you create a .mcp.json file in your project root:

{
  "mcpServers": {
    "sales-tools": {
      "command": "/path/to/venv/bin/python",
      "args": ["/path/to/src/server/main.py"]
    }
  }
}

The three tools (sql_query, get_kpi, sales_report) become available directly in the conversation. You can ask things like "What are the top 3 products by revenue?" or "Generate a full sales report" and the client calls the MCP tools against your database automatically. In Cursor or VS Code the configuration lives in their own settings file, but the server is exactly the same.
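
Since the configuration needs absolute paths to both the interpreter and the server script, it can be convenient to generate it instead of typing it by hand. The snippet below is a small convenience sketch, not part of the project: `build_mcp_config` is a hypothetical helper, and it assumes you run it from the project root with the virtual environment's Python.

```python
import json
import sys
from pathlib import Path


def build_mcp_config(server_script: Path, python_executable: str = sys.executable) -> dict:
    """Build the mcpServers entry for the sales-tools server."""
    return {
        "mcpServers": {
            "sales-tools": {
                "command": python_executable,
                "args": [str(server_script)],
            }
        }
    }


# Resolve the script path relative to the current working directory.
config = build_mcp_config(Path("src/server/main.py").resolve())
config_json = json.dumps(config, indent=2)
print(config_json)

# To write the file, uncomment the next line:
# Path(".mcp.json").write_text(config_json + "\n", encoding="utf-8")
```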

No adapter layer. No glue code. The client launches the server as a subprocess, performs the MCP handshake, reads the tool schemas, and knows exactly how to call them. You write the server once, and it works everywhere.

The power of interoperability

This is the Adapter Pattern at the protocol level. Our Python tools speak MCP, and any client that speaks MCP can use them. Build once, use everywhere.

This decoupling is what makes MCP valuable in practice. Your internal tools (database queries, API wrappers, domain-specific calculations) become reusable building blocks that any agent in your organization can access, regardless of the framework it's built on. The Strands Agent uses them through MCPClient. Claude Code uses them through .mcp.json. Same server, same tools, different clients.

And that's all. With the MCP Python SDK and Strands Agents, we can build tools that are no longer locked into a single framework. The tools live on the server, the intelligence lives on the client, and the protocol connects them. A standard interface for a world of agents.

The full code is available in my GitHub account.